PromptMetrics v1.0.2: The Production-Ready Prompt Registry

Move your LLM apps from prototype to production with PromptMetrics v1.0.2. Explore our secure, self-hosted prompt registry with a new Web UI and Python SDK.

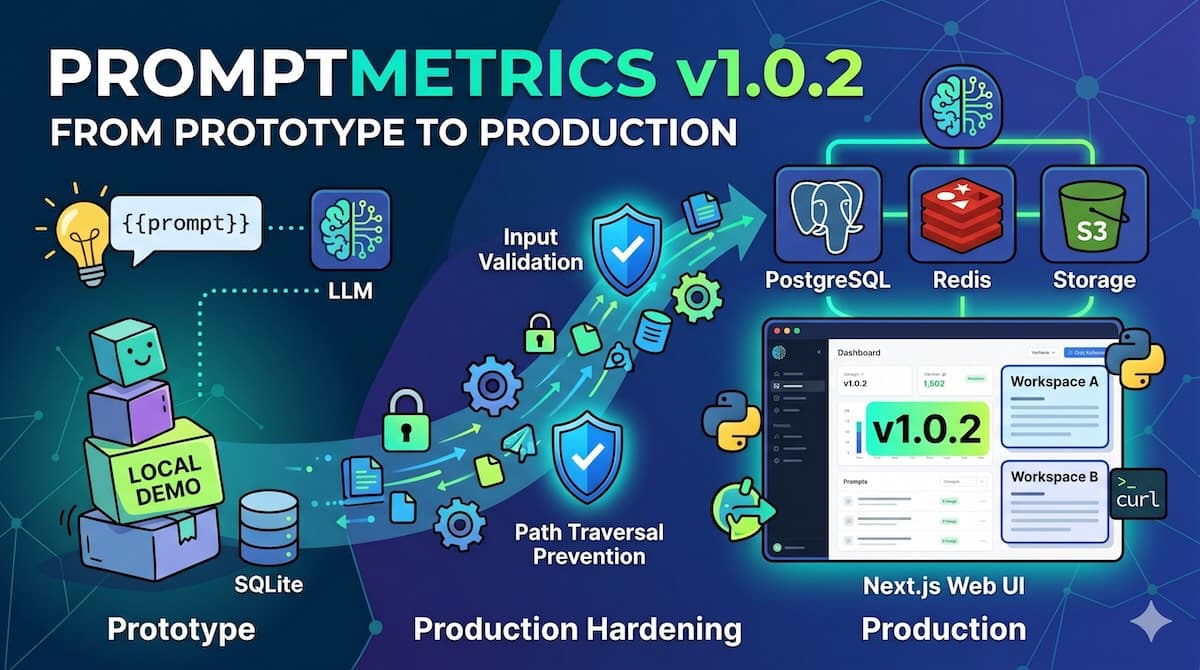

PromptMetrics v1.0.2: From Prototype to Production

Every prompt registry looks amazing in a local demo. It’s when the security questionnaire lands on your desk, when a prompt change silently degrades your production output, or when two teams suddenly need isolated workspaces that you find out whether a tool is a toy or actual infrastructure.

PromptMetrics v1.0.2 is our answer to the question we've heard most from early users: "Can we actually run this in production?"

We built PromptMetrics as a lightweight, self-hosted registry that treats prompt content as code, not data. We wanted versioning, metadata logging, and observability without getting locked into a vendor or paying monthly SaaS fees. Today, we're shipping version 1.0.2, the release that elevates PromptMetrics from a handy single-node registry to a production-grade platform you can confidently run alongside the rest of your infrastructure.

The Theme: Earning Your Trust

Version 1.0.2 is fundamentally about trust. Our early adopters proved the core concept—that you can version prompts, sync them from GitHub, and trace every LLM call—but production environments demand a lot more. They demand defense in depth, horizontal scalability, and tenant isolation.

This release follows a very deliberate arc: first, earn trust through security hardening, then earn adoption through enterprise readiness. If v1.0.0 proved that prompts deserve version control, v1.0.2 proves that version control deserves proper production infrastructure.

Security Hardening (The Stuff That Lets You Sleep)

Self-hosting gives you total control, but control requires vigilance. In 1.0.2, we systematically reviewed and closed gaps that could turn a prompt registry into an attack vector. These fixes aren't glamorous, but they are exactly what unblocks an engineering team from actually deploying.

Path traversal prevention: The FilesystemDriver and GithubDriver now strictly sanitize all user-supplied paths before they ever touch the OS. A malformed relative path can no longer climb out of its designated directory.

Strict input validation: The EvaluationController now enforces rigid Joi schemas on every payload. Malformed requests bounce at the door before they get anywhere near your business logic.

Squashed rate-limiter race conditions: We wrapped the SQLite counter increment inside atomic db.transaction() blocks. Rapid concurrent requests used to slip past the limit; now the counter stays dead accurate, even under heavy burst loads.

Failing closed on webhooks: The GitHub webhook handler is now completely hardened. If GITHUB_WEBHOOK_SECRET is missing, the endpoint outright rejects the request. We ripped out the old GITHUB_TOKEN fallback because a webhook endpoint that accepts unsigned traffic isn't a webhook endpoint, it's a liability.

Plugged metadata leaks: We stripped out an overzealous console.log(JSON.stringify(...)) in the LogController that was accidentally leaking LLM metadata to stdout.

SQL injection prevention: Dynamic tableName and columnName values are now aggressively validated against /^[a-z_][a-z0-9_]*$/i before any SQL interpolation happens. Your database schema stays exactly where it belongs: under your control.
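To make three of these checks concrete, here is a minimal Python sketch of the general techniques: containing a user-supplied path inside a base directory, allowlisting SQL identifiers with the same pattern quoted above, and rejecting webhooks when no secret is configured. The function names are hypothetical and this is not the PromptMetrics source (which is Node-based). The sha256= signature format follows GitHub's X-Hub-Signature-256 convention; whether PromptMetrics reads that exact header is an assumption.

```python
import hashlib
import hmac
import os
import re

# Same allowlist as /^[a-z_][a-z0-9_]*$/i. fullmatch() is used below because
# Python's "$" would also accept a trailing newline.
IDENTIFIER_RE = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def resolve_inside(base_dir: str, user_path: str) -> str:
    """Resolve user_path under base_dir, refusing anything that escapes it."""
    base = os.path.realpath(base_dir)
    candidate = os.path.realpath(os.path.join(base, user_path))
    # commonpath equals base only when candidate is base itself or lives below it.
    if os.path.commonpath([base, candidate]) != base:
        raise ValueError(f"path escapes base directory: {user_path!r}")
    return candidate


def checked_identifier(name: str) -> str:
    """Return name unchanged if it is a safe table or column identifier."""
    if not IDENTIFIER_RE.fullmatch(name):
        raise ValueError(f"unsafe SQL identifier: {name!r}")
    return name


def verify_github_signature(secret, body: bytes, signature_header) -> bool:
    """Fail closed: a missing secret or missing header means rejection."""
    if not secret or not signature_header:
        return False
    digest = hmac.new(secret.encode("utf-8"), body, hashlib.sha256).hexdigest()
    expected = ("sha256=" + digest).encode("utf-8")
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected, signature_header.encode("utf-8"))
```

Each helper either returns a validated value or refuses outright, so callers never have a "partially trusted" input to handle.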

Feature Highlights

Evaluation Framework

Before 1.0.2, measuring prompt quality basically meant shipping to production and waiting to see if users complained. That's not a great workflow. Now, you can create, score, and manage prompt evaluations through a dedicated REST API. You can attach numeric scores, labels, and metadata to any prompt version, turning subjective "this feels worse" feedback into hard, reproducible metrics.

Bash

curl -X POST http://localhost:3000/v1/evaluations \
  -H "Content-Type: application/json" \
  -H "X-API-Key: $API_KEY" \
  -d '{
    "prompt_name": "summary_v3",
    "version": "1.0.0",
    "score": 0.94,
    "metadata": {
      "model": "gpt-4.1",
      "dataset": "bbc_news_1k"
    }
  }'

You can finally treat prompt regressions the same way you treat code regressions: catch them before they reach your users.
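If your evaluation runs already live in Python, the same request can be assembled with the standard library alone. This sketch mirrors the curl call above field for field. The helper names are hypothetical, it deliberately avoids guessing at the Python SDK's method names, and it assumes the endpoint replies with a JSON body.

```python
import json
import urllib.request

BASE_URL = "http://localhost:3000"  # same host and port as the curl example


def build_evaluation_request(prompt_name, version, score, metadata, api_key):
    """Build (but do not send) a POST to /v1/evaluations."""
    payload = {
        "prompt_name": prompt_name,
        "version": version,
        "score": score,
        "metadata": metadata,
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/v1/evaluations",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json", "X-API-Key": api_key},
        method="POST",
    )


def submit_evaluation(request, timeout=10):
    """Send the request; assumes the server answers with JSON."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Splitting "build" from "submit" keeps the payload easy to unit test in CI without a running server, for example by calling build_evaluation_request("summary_v3", "1.0.0", 0.94, {"model": "gpt-4.1", "dataset": "bbc_news_1k"}, api_key) and inspecting the result.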

Web UI Dashboard

Managing prompts through curl and raw JSON is great for automation, but human operators actually need to see what's going on. The new Next.js dashboard gives you a unified interface to browse prompts, inspect logs and traces, review evaluation runs, manage labels, and tweak settings. Your PMs can finally audit prompt versions without filing Jira tickets, and your SREs can inspect traces without grepping through raw log files.

The Python SDK

Hand-rolling HTTP clients for internal tools is tedious and prone to breakage. The new Python SDK (over in clients/python/) exposes the entire PromptMetrics API surface with native methods and types. Install it, authenticate once, and start versioning prompts straight from your training pipelines or Jupyter notebooks. It turns an afternoon of writing boilerplate into a five-minute import.

Production-Ready Infrastructure

Running a single SQLite instance on your laptop is fantastic for a proof of concept, but you don't want to run production traffic on it.

Bring your own DB: Swap DATABASE_URL to a PostgreSQL connection string, and the application automatically switches backends.

Redis integration: Point REDIS_URL to a Redis cluster to unlock LRU caching for prompt lookups and distributed sliding-window rate limiting (complete with Retry-After headers).

Durable storage: Need object storage? Set the DRIVER environment variable to s3 and store your prompt artifacts on any S3-compatible service.

Multi-tenancy: For teams managing multiple products or customers, the new multi-tenancy layer isolates workspaces via the X-Workspace-Id header. One tenant's prompts will never bleed into another's namespace.
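To picture how the sliding-window limiting from the Redis item arrives at a Retry-After value, here is a single-process Python sketch. It keeps timestamps in memory rather than in Redis, and the class and parameter names are hypothetical, so read it as an illustration of the algorithm rather than the shipped implementation.

```python
import math
import time
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """In-memory sliding-window log limiter (stand-in for a Redis-backed one)."""

    def __init__(self, limit, window_seconds, clock=time.monotonic):
        self.limit = limit
        self.window = window_seconds
        self.clock = clock  # injectable so tests can control time
        self.hits = defaultdict(deque)  # key -> timestamps of accepted requests

    def check(self, key):
        """Return (allowed, retry_after_seconds) for one request under key."""
        now = self.clock()
        hits = self.hits[key]
        # Drop timestamps that have slid out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) < self.limit:
            hits.append(now)
            return True, 0
        # The oldest accepted hit leaves the window at hits[0] + window.
        retry_after = math.ceil(hits[0] + self.window - now)
        return False, max(retry_after, 1)
```

A handler would call check() with the caller's API key and, when the request is refused, answer with HTTP 429 and the second value as the Retry-After header. A distributed version needs the prune, count, and append steps to run atomically in the shared store.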

Combined, this means you can slot PromptMetrics comfortably behind your existing load balancers and identity providers without doing architectural backflips.

Moving the Vision Forward

PromptMetrics was born from a simple belief: prompts deserve the same rigor as the code that calls them. Our vision has always been to be the "Git for prompts." But code without CI, access control, and observability is just text sitting in a file. Version 1.0.2 adds the vital platform layer that makes this vision actually viable for teams shipping real products.

Under the hood, you now have:

Circuit breakers on GitHub API calls

Per-API-key rate limiting with expiration dates

An async audit-log queue for compliance

A rock-solid migration system powered by Umzug

Live OpenAPI documentation served at /docs
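For readers unfamiliar with the first item, a circuit breaker stops calling a failing upstream for a cooldown period instead of hammering it. The following Python sketch shows the general pattern. The thresholds, class names, and half-open behaviour are assumptions for illustration, not the settings PromptMetrics uses for GitHub API calls.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when a call is skipped because the breaker is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock  # injectable so tests can control time
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                raise CircuitOpenError("upstream calls are suspended")
            # Cooldown elapsed: half-open, so one trial call is let through.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                # Open the circuit, or re-open it after a failed trial call.
                self.opened_at = self.clock()
            raise
        # A success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

Wrapping each outbound call as breaker.call(fetch_prompt, path), where fetch_prompt is whatever function talks to the upstream, means an outage turns into fast, explicit CircuitOpenError failures instead of a pile of slow timeouts.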

This is what "Git for prompts" looks like at scale. It's versioned, observable, secure, and entirely under your own roof.

Get Started Today

Ready to stop treating your prompts like second-class artifacts?

Bash

npm install -g promptmetrics
promptmetrics-server

Alternatively, pull the Docker image, set your DATABASE_URL, and open up the dashboard. If you're upgrading from an earlier version, restart with the new environment variables (check the updated configuration guide for details).

Star the project on GitHub to keep up with the roadmap, and definitely open an issue if you hit a snag. We built PromptMetrics so you can truly own your prompt layer, and v1.0.2 makes that ownership safe, scalable, and complete.